Why Understanding Digital Body Language is Imperative in Business Today

If you were walking through airport security and noticed the TSA agents had blindfolds on, how would you feel?

Personally, I’d be petrified, as I’m sure you would be.

Now imagine a teacher walking out of a classroom immediately after passing out an exam.

Or a drug agency giving you a ‘do-it-yourself’ take-home drug test.

Or a poker player turning their back on their opponents while the cards are dealt.

Or an interrogator…okay, okay, I’m sure you get the point.

Intentionally ignoring someone’s behavioral cues, especially in a security setting, is ignorant and borderline reckless behavior.

Human body language and behavioral intelligence are absolutely imperative to truly understand someone’s intent.

So why do companies continue to ignore this simple fact and do nothing to fix it?

Blissful Ignorance

Today, with a majority of business interactions taking place online, businesses are blind to their customers’, employees’, and agents’ behavioral cues.

They may as well put on a blindfold while they go about their day.

But with the advances in A.I., there are readily available predictive analytics solutions, so why are companies actively choosing to ignore this glaring issue?

To summarize some of the common replies we hear:

“Our customers are behind computer screens – the technology doesn’t exist.” Hmm

“Our customers and agents are honest, we’re not worried about them being risky or fraudulent.” Gulp

“We’re still dealing with printers and faxes, we haven’t even digitized our application/claims process.” Yikes, but okay…

“We prefer our current state of blissful ignorance.”

Okay, no one has ever said that last one out loud, but we’re paraphrasing here, alright?

The fact is, there is no good excuse anymore.

So, rather than focus on the past, what should companies do to plug this gaping hole?

I’d suggest implementing the following three Digital Body Language solutions:

- Digital Identity – Voice and Facial Analysis

- Behavioral Biometrics

- Digital Behavioral Intelligence

Use Cases for Digital Body Language Solutions

There is not a simple, one-size-fits-all way to learn about and implement Digital Behavioral Intelligence (DBI).

And before we dive into it, I believe it was Newton who said, “For every action, there is an equal and opposite reaction.”

In the same vein as nuclear energy, A.I. can be used for an infinite amount of good, or it can be used for an equal and opposite amount of bad.

A.I. is rooting itself into every department, process, report, forecast, and business operation today.

While it’s been used primarily for process automation, trend analysis, and forecasting, it’s now being used for identity verification, risk prediction, and fraud prevention.

On the flip side, it’s also being used by bad actors to create fake identities, bypass risk measures, and perform fraudulent activities.

So where does this leave us – is it truly a zero-sum game?

Let’s take a look at how companies today are using Digital Behavioral Intelligence to fight fire with fire.

Digital Identity – Voice and Facial Analysis

Recent headlines have highlighted some of the advancements in facial / voice recognition technology, particularly in China.

They have successfully utilized this technology in a variety of ways, from retail to banking to public transportation monitoring.

Straight out of a scene from Minority Report – Chinese police are using facial recognition biometric devices in major public transportation areas (think trains, buses, and planes) to identify passengers and spot suspected criminals or terrorists.

They have also begun integrating “far-field” voice recognition technology to speed up ticketing lines, “smile to pay” applications at restaurants, and “face as boarding pass” at airports.

I’m okay with that, so far…

Where it gets dicey is with some of the other, more intrusive methods of monitoring their population.

Netflix’s Black Mirror, the modern-day Twilight Zone, unveils some futuristic (maybe not so much) applications for this type of technology.

Assigning someone a ‘social credit score’ based on their interactions with others – think Uber rating for everything you do – feels like we’re diving into the dark side of A.I…

“Doing volunteer work, donating blood, and recycling can all boost one’s social credit score, while incurring debt or criticizing the government can render you blacklisted, unable to buy property, take out loans, and now, engage in some forms of mass travel.”

Imagine jaywalking on a Tuesday and being rejected by TSA on a Wednesday. Sheesh.

There is a fine line between creating a ticketless and safe transit operation and violating basic human rights.

I have a feeling this will be hotly debated over the coming years.

Regardless, there are still many current and future benefits of this technology.

Anyone who uses FaceID on their smartphone has already interacted with it.

Companies like HireVue are using Facial Recognition and Analysis to screen job applicants.

Another way A.I. is integrated into our daily lives is through behavioral biometrics.

What is Behavioral Biometrics?

To quote TechTarget – “Behavioral biometrics is the field of study related to the measure of uniquely identifying and measurable patterns in human activities. The term contrasts with physical biometrics, which involves innate human characteristics such as fingerprints or iris patterns.”

Believe it or not, the way you use your iPhone is far different than the way your friend does.

The same way your signature or fingerprint is unique to you, so too is the way you physically interact with a device.

Behavioral biometric verification methods include keystroke analysis, gait analysis (the way you walk), voice ID (the way you speak), mouse use, signature analysis, and cognitive biometrics.

This can be extremely useful when attempting to identify if John Smith is, in fact, John Smith.

Detecting risky or fraudulent account takeovers using ‘continuous authentication’ is a big use case for this technology today.
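
To make the idea of comparing John Smith to John Smith concrete, here is a minimal sketch of keystroke-based continuous authentication. It is an illustration only, not any vendor's actual method: the feature (average key hold time in milliseconds), the function names, the sample numbers, and the threshold of 3 standard deviations are all assumptions made for this example.

```python
from statistics import mean, stdev

def build_profile(enrollment_sessions):
    """Summarize a user's typing rhythm from several past sessions.

    Each session is a list of key hold times in milliseconds
    (a hypothetical feature chosen for this sketch).
    """
    session_means = [mean(session) for session in enrollment_sessions]
    return {"mean": mean(session_means), "stdev": stdev(session_means)}

def anomaly_score(profile, session):
    """Distance, in standard deviations, between this session's
    average hold time and the user's usual average."""
    spread = max(profile["stdev"], 1e-9)  # avoid dividing by zero
    return abs(mean(session) - profile["mean"]) / spread

def is_same_user(profile, session, threshold=3.0):
    """Flag the session as consistent with the profile if it sits
    within `threshold` standard deviations (assumed cutoff)."""
    return anomaly_score(profile, session) <= threshold

# Made-up enrollment data for "John Smith"
john_profile = build_profile([
    [98, 102, 100, 100],
    [108, 112, 110, 110],
    [103, 107, 105, 105],
    [93, 97, 95, 95],
])

todays_session = [101, 107, 104, 104]     # typing rhythm close to John's norm
suspicious_session = [150, 170, 160, 160]  # much slower key presses

print(is_same_user(john_profile, todays_session))
print(is_same_user(john_profile, suspicious_session))
```

A real system would track many more signals (mouse movement, typing cadence between keys, device handling) and would re-check them throughout the session rather than once.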

But what about predicting these occurrences?

Digital Behavioral Intelligence

Imagine you’re a branch manager at an insurance company.

The branch is about to open and outside are 10 people waiting patiently in line to apply for Life Insurance.

Suddenly, someone slips you a note that says 9 of those potential customers are genuine, 1 is highly risky. Your job is to find the 1 risky person.

Now, here’s the rub…

If you treat EVERYONE like they are risky, those customers will turn around and walk away and you’ll lose their business forever.

With so many available options, the balance between security and customer experience has never been more crucial than it is today.

So, what would you do? Where would you even start?

Oh, and I forgot to mention…you’re blindfolded.

The fact that most customers are behind a computer screen means this exact scenario plays out for financial service companies every single day.

Now, imagine that same person who slipped you the note slipped you a pair of X-Ray “Risk Detection” goggles.

What would you do?

You’d put them on, walk outside, identify the risky person, and when they begin to apply, further qualify and/or reject their policy before it can cause you harm.

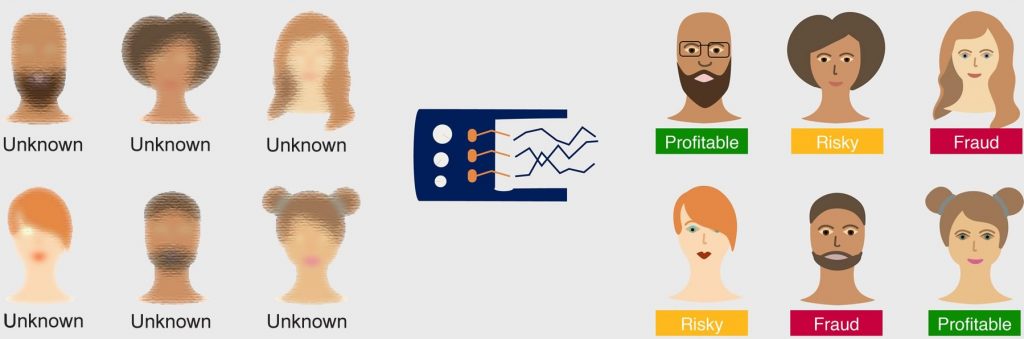

That is the power of Digital Behavioral Intelligence (DBI) and is, in simple terms, how Predictive Behavioral Analytics works.

Rather than comparing John Smith to John Smith, collecting digital behavioral intelligence on all of your users allows you to compare John Smith against every other customer who has ever walked through the door.

Using an already determined outcome – i.e. a risky policy, fraudster, or delinquent payer – a company can apply predictive analytics to their behavioral intelligence to predict the likely outcome of a new user.

Going back to the risk-goggles analogy – having analyzed thousands of other risky customers, spotting the risky applicant by their ‘Digital Body Language’ is relatively simple.

Predictive Behavioral Analytics (PBA) uses machine learning to analyze users’ Digital Body Language. It learns good-user (and bad-actor) behavioral norms, identifies deviant behavior and key risk signals such as copying/pasting sensitive information or changing/correcting answers, and then predicts, in real time, the likely outcome for a user.
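
As a rough sketch of that workflow, the example below trains a tiny logistic regression on past sessions with known outcomes and then scores new applicants. Everything here is assumed for illustration: the two features (paste count and answer corrections), the made-up training data, and the choice of logistic regression itself. It is not a description of how any production PBA product is built.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def train(sessions, labels, epochs=2000, lr=0.1):
    """Fit a logistic regression with batch gradient descent.

    sessions: list of feature lists, e.g. [paste_count, corrections]
    labels:   1 for a known risky outcome, 0 for a known good one
    """
    n_features = len(sessions[0])
    weights = [0.0] * n_features
    bias = 0.0
    for _ in range(epochs):
        grad_w = [0.0] * n_features
        grad_b = 0.0
        for x, y in zip(sessions, labels):
            predicted = sigmoid(bias + sum(w * xi for w, xi in zip(weights, x)))
            error = predicted - y
            for j, xi in enumerate(x):
                grad_w[j] += error * xi
            grad_b += error
        weights = [w - lr * g / len(sessions) for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / len(sessions)
    return weights, bias

def risk_score(model, session):
    """Predicted probability (0 to 1) that a session ends in a risky outcome."""
    weights, bias = model
    return sigmoid(bias + sum(w * xi for w, xi in zip(weights, session)))

# Made-up history: [paste_count, answer_corrections] per past applicant
past_sessions = [[0, 1], [0, 0], [1, 1], [0, 2], [1, 0],   # turned out fine
                 [3, 5], [4, 6], [2, 7], [5, 4]]           # turned out risky
past_outcomes = [0, 0, 0, 0, 0, 1, 1, 1, 1]

model = train(past_sessions, past_outcomes)

print(risk_score(model, [0, 1]))  # applicant behaving like past good users
print(risk_score(model, [4, 6]))  # applicant behaving like past risky users
```

The score can then drive the "further qualify and/or reject" decision: low scores pass straight through, while high scores trigger extra verification instead of treating every applicant as a suspect.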

Armed with behavioral ‘x-ray goggles’, or the Digital Polygraph as we call it, companies can finally predict potential risky applicants and fraudsters before they are customers.

Why wait for something bad to happen when you can prevent it from happening in the first place?

In summary

There is no shortage of use cases for the A.I. solutions available today. Still, it is up to the companies themselves to assess their internal needs and goals, and then actually implement the solution.

Digital behavioral intelligence collection methods such as facial recognition, behavioral biometrics, and applied predictive behavioral analytics are a great way to get started.

At this point, they’re available, affordable, and have a great return on investment.

And, as always, we’re here to help and happy to discuss your current or future Digital Behavioral Intelligence initiatives! Reach us at info@formotiv.com, or book a free demo with ForMotiv today!